Some Facts About DeepSeek and ChatGPT That May Make You Feel Better

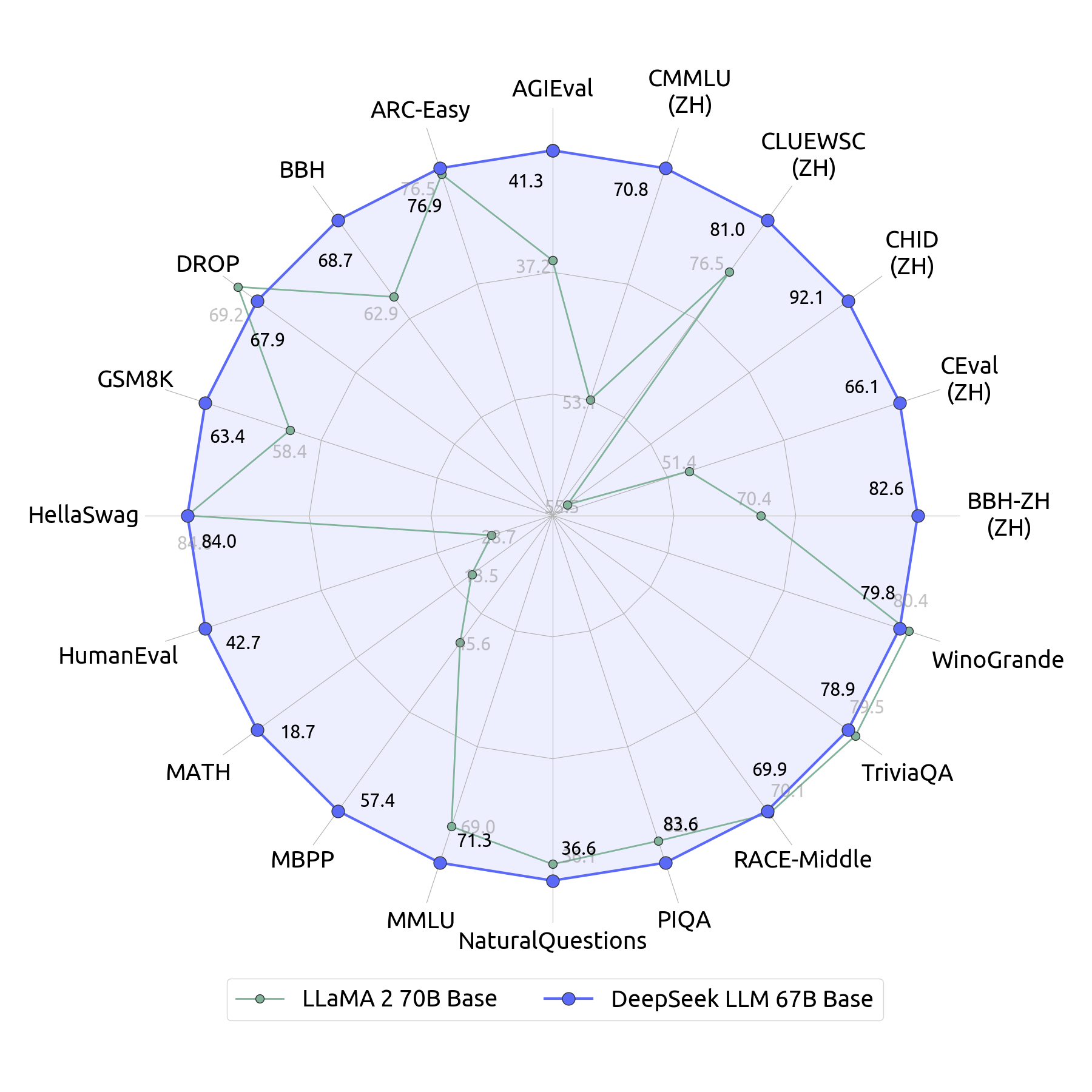

And even then, full funding apparently hasn’t been secured yet, and the government won’t be offering any. Amazon Haul is offering its deepest discounts yet, with some items reaching as much as 90% off through layered promotions, as Amazon continues aggressive subsidization despite the looming adjustments to the de minimis import threshold. Despite these issues, banning DeepSeek could be difficult because it is open source. If it is now possible, as DeepSeek has demonstrated, for smaller, less well-funded rivals to follow close behind and deliver similar performance at a fraction of the cost, those smaller companies will naturally peel customers away from the big three.

On Jan. 20, 2025, DeepSeek launched its R1 LLM at a fraction of the cost that other vendors incurred in their own development. DeepSeek LLM was the company's first general-purpose large language model. DeepSeek Coder was the company's first AI model, designed for coding tasks. DeepSeek-Coder-V2 expanded the capabilities of the original coding model. Testing DeepSeek-Coder-V2 on various benchmarks shows that it outperforms most models, including Chinese competitors. No one knows exactly how much the large American AI companies (OpenAI, Google, and Anthropic) spent to develop their highest-performing models, but according to reports, Google spent between $30 million and $191 million to train Gemini, and OpenAI spent between $41 million and $78 million to train GPT-4.

Below, we highlight performance benchmarks for each model and show how they stack up against each other in key categories: mathematics, coding, and general knowledge. One noticeable difference between the models is their general-knowledge strengths. The other noticeable difference is the pricing of each model. While OpenAI's o1 maintains a slight edge in coding and factual reasoning tasks, DeepSeek-R1's open-source access and low prices are appealing to users. DeepSeek's pricing is significantly lower across the board, with input and output prices a fraction of what OpenAI charges for GPT-4o. Naomi Haefner, assistant professor of technology management at the University of St. Gallen in Switzerland, said the question of distillation could cast doubt on the notion that DeepSeek created its product for a fraction of the cost. The author is a professor emeritus of physics and astronomy at Seoul National University and a former president of SNU. White House Press Secretary Karoline Leavitt recently confirmed that the National Security Council is investigating whether DeepSeek poses a potential national security risk. The U.S. Navy banned its personnel from using DeepSeek's applications due to security and ethical concerns.

Trained using pure reinforcement learning, it competes with top models in complex problem-solving, particularly in mathematical reasoning. While R1-Zero is not a top-performing reasoning model, it does show reasoning capabilities by generating intermediate "thinking" steps, as shown in the figure above. This figure is significantly lower than the hundreds of millions (or billions) of dollars American tech giants spent developing other LLMs. With 67 billion parameters, it approached GPT-4-level performance and demonstrated DeepSeek's ability to compete with established AI giants in broad language understanding. It featured 236 billion parameters, a 128,000-token context window, and support for 338 programming languages, to handle more complex coding tasks. The model has 236 billion total parameters with 21 billion active, significantly improving inference efficiency and training economics. Thus it seemed that the path to building the best AI models in the world was to invest in more computation during both training and inference. For instance, OpenAI reportedly spent between $80 million and $100 million on GPT-4 training. OpenAI's CEO, Sam Altman, has also said that the cost was more than $100 million. And last week, the company said it had released a model that rivals OpenAI’s ChatGPT and Meta’s (META) Llama 3.1, and which rose to the top of Apple’s (AAPL) App Store over the weekend.
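
To make the "236 billion total, 21 billion active" figures above more concrete, here is a rough back-of-the-envelope sketch. It assumes the common rule of thumb of about 2 floating-point operations per parameter per token for a forward pass; that rule, and the helper names `active_fraction` and `flops_per_token`, are illustrative assumptions, not DeepSeek's own accounting.

```python
# Parameter counts quoted in the text (in billions).
TOTAL_PARAMS_B = 236
ACTIVE_PARAMS_B = 21


def active_fraction(active: float, total: float) -> float:
    """Share of the model's parameters used to process each token."""
    return active / total


def flops_per_token(params_b: float) -> float:
    """Rough forward-pass cost, ASSUMING ~2 FLOPs per parameter per token."""
    return 2 * params_b * 1e9


frac = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
dense = flops_per_token(TOTAL_PARAMS_B)   # if every parameter were used
sparse = flops_per_token(ACTIVE_PARAMS_B)  # only the active parameters

print(f"active share: {frac:.1%}")                      # active share: 8.9%
print(f"compute ratio vs. dense: {dense / sparse:.1f}x")  # compute ratio vs. dense: 11.2x
```

Under these assumptions, only about 9% of the weights do work for any given token, so per-token compute is roughly an eleventh of what a dense model of the same total size would need. This is one way to read the claim about improved inference efficiency, though real costs also depend on memory, routing overhead, and hardware.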

The most straightforward way to access DeepSeek chat is through its web interface. Visit the homepage and click "Start Now," or go directly to the chat page. On the chat page, you’ll be prompted to sign in or create an account. After signing up, you can access the full chat interface. On mobile, simply search for "DeepSeek" in your device's app store, install the app, and follow the on-screen prompts to create an account or sign in. For now, though, data centres generally rely on electricity grids that are often heavily dependent on fossil fuels. These are all problems that might be solved in coming versions. Rate limits and restricted signups are making it hard for people to access DeepSeek. But unlike the American AI giants, which usually offer free versions but charge fees for access to their higher-performing AI engines and for more queries, DeepSeek is entirely free to use. They planned and invested, whereas the United States clung to a failed ideology: the belief that free markets, left to their own devices, will save us. Will DeepSeek get banned in the US? On December 26, the Chinese AI lab DeepSeek announced its V3 model.
